Hey friends,

A few days ago, I had a slightly uncomfortable thought. What happens when AI gets so good… you can’t tell what’s real anymore?

Not in a futuristic, sci-fi way. In a very practical, everyday way. A video from a colleague. A message from a client. A report from a trusted firm.

At what point do we stop believing what we see? That question sat with me all week. And this conversation brought it into sharp focus.

The Moment AI Crossed The Line

We’ve been talking about AI video for a while now. It’s always been “impressive.” But now it’s different.

With tools like Sora 2, you can take a single image of yourself and generate entire videos. Not just talking head videos, but full scenes, different camera angles, storytelling, movement, emotion.

You can create films, explainers, animations. You can place yourself in situations you’ve never been in.

And it looks real. Very real. Not “interesting experiment” real.

“Wait… is that actually real?” real. That’s the shift.

This isn’t just a creative tool anymore. It’s a perception tool. And once perception becomes editable, everything else starts to move with it.

Where This Gets Uncomfortable

The obvious use case is storytelling. Content creation becomes accessible to anyone with imagination. That’s exciting.

But the other side of this is harder to ignore. If I can generate a video of myself saying anything… so can someone else. If I can create a realistic scenario… so can someone with bad intent.

We’re already seeing scams evolve from text messages to voice. The next step is video. Which means a simple rule is emerging that most people aren’t ready for:

Don’t trust what you see. Verify it. Even with people you know.

It sounds extreme. But we’re already there.

The Illusion Of Safeguards

Platforms are trying. Watermarks, metadata, ownership controls, collaboration permissions. All good steps.

But here’s the reality. If one platform adds safeguards, another removes them. If one system tags AI content, another strips those tags out.

The technology is moving faster than the controls around it. And governments, as always, are playing catch-up.

So the responsibility shifts. From systems… to people.

The Real Risk For Businesses

Most of the conversation around AI focuses on efficiency. Faster content. Cheaper production. Scalable output.

All true.

But I think we’re underestimating something much more fragile.

Trust.

There was a recent case where a major consultancy was paid around $440,000 by the government to produce a report. The report was generated using AI. And no one properly checked it. It included incorrect citations. Fabricated references. Basic errors that should never have made it through.

This isn’t an AI problem. This is a human oversight problem.

If you’re going to use AI to accelerate work, your responsibility to validate it increases, not decreases. Otherwise, what are you actually being paid for?

The Mistake I Keep Seeing

There’s a pattern emerging across organisations. Buy the tool first. Train the people later. It sounds small. It isn’t.

I was speaking with a law firm recently. They had just rolled out ChatGPT Enterprise and planned to train the team the following week.

I told them to stop. Training comes first. Because one untrained person using AI for a single day can damage your brand more than the tool can help it.

We’ve already seen this play out in large organisations. Rolling out AI too fast. Removing people too early. Fixing the damage after the fact.

Fail fast works in product development. It doesn’t work when trust is on the line.

How To Think About AI Instead

The simplest way to approach this is something I’ve said many times.

Treat AI like an intern. Give it tasks. Review everything. Train it over time. Build trust gradually.

But never assume it’s right just because it sounds confident. Because it will sound confident… even when it’s wrong.

What This Means For You

We’re entering a phase where two things are true at the same time.

AI is becoming one of the most powerful tools we’ve ever had. And it’s also becoming one of the easiest ways to lose trust.

The businesses that win won’t just be the fastest adopters. They’ll be the ones who:

- Use AI to enhance their capability
- Train their people properly
- Keep humans in the loop
- And most importantly, protect their credibility at all costs

Because in a world full of generated content… being real becomes your biggest advantage.

A Quick Personal Note

On a related note, I wanted to personally invite you to something that means a lot to me.

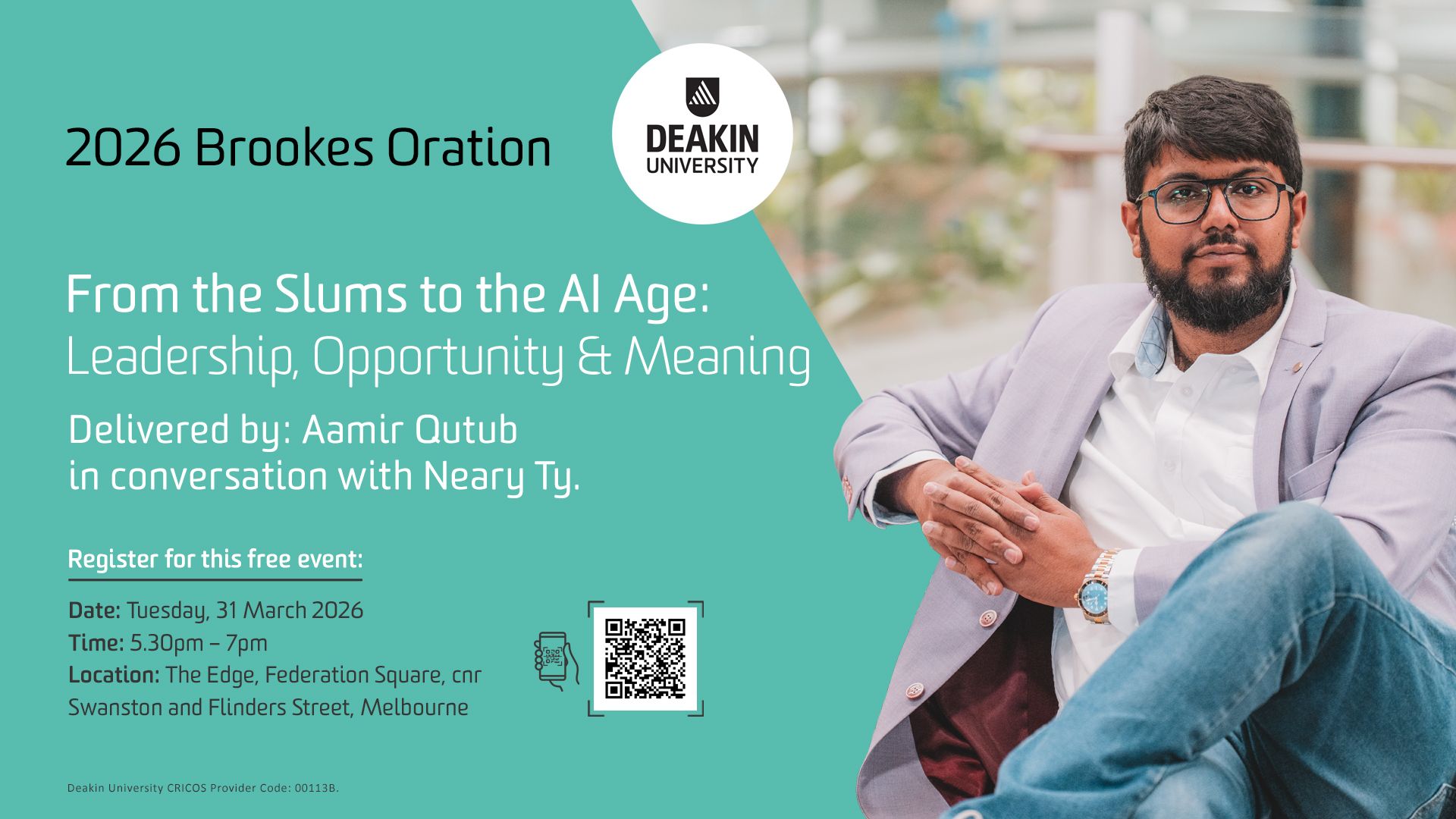

I’ll be speaking at the 2026 Deakin Brookes Oration.

The talk is called:

From the Slums to the AI Age: Leadership, Opportunity & Meaning

Deakin has been part of my journey for years, so being invited back for this is genuinely special. But more importantly, it’s a chance to have a deeper conversation about where all of this is heading.

We’ll get into:

- What the future of business actually looks like in an AI-driven world
- Whether AI replaces people, or makes human judgement more important
- The real risks and ethical challenges, and where people are already getting caught out
- What the workplace looks like when AI agents work alongside teams
- And what you should be doing in the next 6 to 365 days to stay ahead

It won’t be technical. It won’t be polished theory. Just a practical, honest perspective on what’s changing and how to navigate it without losing your footing.

📍 The Edge, Federation Square, Melbourne

📅 Tuesday, 31 March 2026

⏰ 5:30pm – 7:00pm

It’s free, and I’d genuinely love to see you there.

A Final Thought

Cars are dangerous. We still use them.

But we train people before handing over the keys.

AI is no different. The risk isn’t the tool. It’s how casually we’re starting to use it.

See you next week,

— Aamir

📲 Resources & Links

🎧 Listen to the Podcast Episode on: Spotify | Apple Podcasts | YouTube

📘 Book: The CEO Who Mocked AI (Until It Made Him Millions) by Aamir Qutub